The Next AI Breakthrough Is Old-Fashioned Software Engineering

Everyone’s chasing smarter AI. But the real breakthrough won’t be intelligence. It’ll be reliability.

Over the weekend, Andrej Karpathy’s conversation on the Dwarkesh pod made waves when he said AGI is still at least a decade away.

His reasoning came from experience. Years ago, the first self-driving car demos looked amazing. Cars gliding through cities, reacting to obstacles in real time. I also remember seeing those early GoogleX SUVs near the LinkedIn campus in 2013.

Yet a decade later, the work continues. In fact, I’m writing this inside a Waymo that just told me to get out in the middle of an intersection.

The progress has been less about demos and more about grinding out reliability and predictability.

We’re now in the same moment with AI agents.

The productivity unlocked in just the last couple of years is undeniable. I have so many go-to prompts and agents helping me daily, and I’m loving coding because of how fun and easy it has become. But the road from “impressive demo” and “babysitting your agent” to “production-grade reliability” requires hard, unglamorous engineering work.

It’s easy to make something work once. The hard part is making it work every time.

Reliability Is the Real Frontier

While the stakes with AI agents might not match those of autonomous vehicles, the underlying challenge is the same: reliability. Automating parts of your work or life still demands rigor. Companies can’t afford hallucinations in customer support, errors in financial workflows, or security breaches with MCP or AI browsers that get tricked into exposing your data.

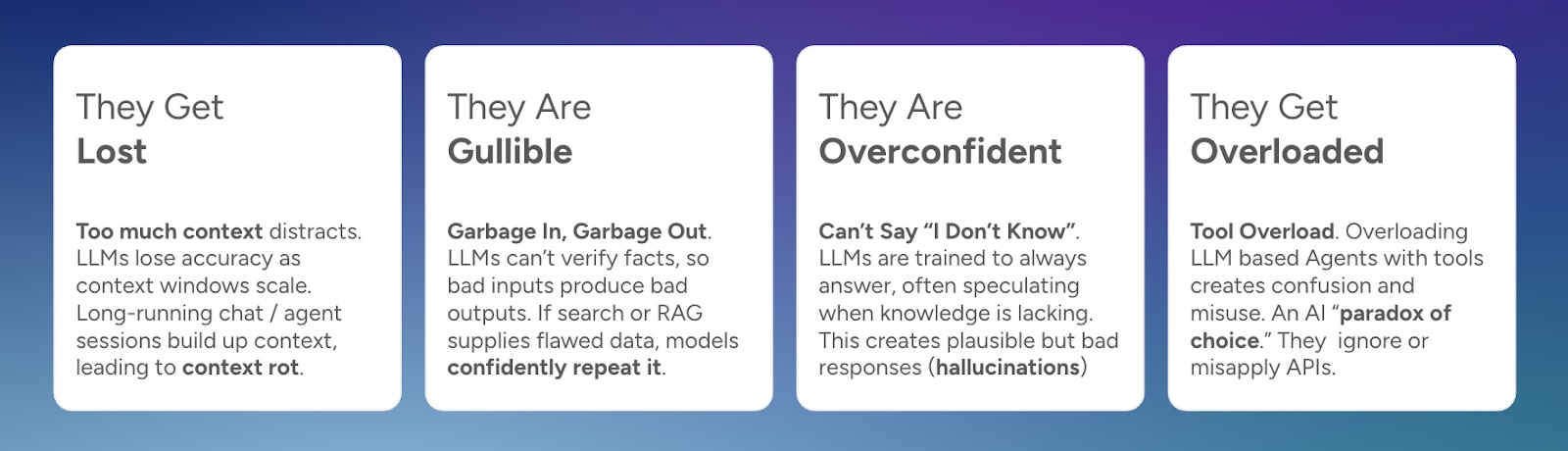

Most of today’s limitations trace back to the structural weaknesses of LLMs. Many have written about context rot, security vulnerabilities, or tool misuse. I’ve also explored this in The 4 Ways LLMs Fail, where I break down how these weaknesses create real-world reliability issues.

AI remains incredibly useful today for lower-stakes tasks, especially with a human in the loop, but the next wave of innovation will come from teams focused on high-stakes reliability: building agents that don’t just “work sometimes,” but work nearly all the time.

As Karpathy said, it’s going to be a march of nines: going from 90% to 99.99% requires a lot more engineering effort, testing, and iteration.
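To make the march of nines concrete, here is a rough back-of-the-envelope sketch. The 10,000-task volume and the 10-step workflow are illustrative assumptions, not figures from the conversation, and the chained calculation assumes each step fails independently, which real agents rarely do.

```python
def failures_per(n_tasks: int, reliability: float) -> float:
    """Expected number of failed tasks out of n_tasks at a given success rate."""
    return n_tasks * (1.0 - reliability)


def chained_success(step_reliability: float, n_steps: int) -> float:
    """Success rate of a workflow in which all n_steps must succeed.

    Assumes steps fail independently (a simplification).
    """
    return step_reliability ** n_steps


# Illustrative numbers only: 10,000 tasks and a 10-step agent workflow.
for r in (0.90, 0.99, 0.999, 0.9999):
    print(
        f"{r:.2%} reliable: ~{failures_per(10_000, r):.0f} failures per 10,000 tasks; "
        f"a 10-step chain succeeds {chained_success(r, 10):.1%} of the time"
    )
```

Under these assumptions, a step that is 90% reliable leaves a 10-step workflow succeeding only about a third of the time, which is why each extra nine matters more as agents take on longer tasks.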

Borrowing from Decades of Software Engineering

The good news is that we already have a playbook. The path to reliable AI will look a lot like the evolution of modern big data, web-scale, and distributed software systems. Many of the same ideas apply:

- Evals-driven development - validation loops and metrics for every model and retrieval pipeline, similar to test-driven development.

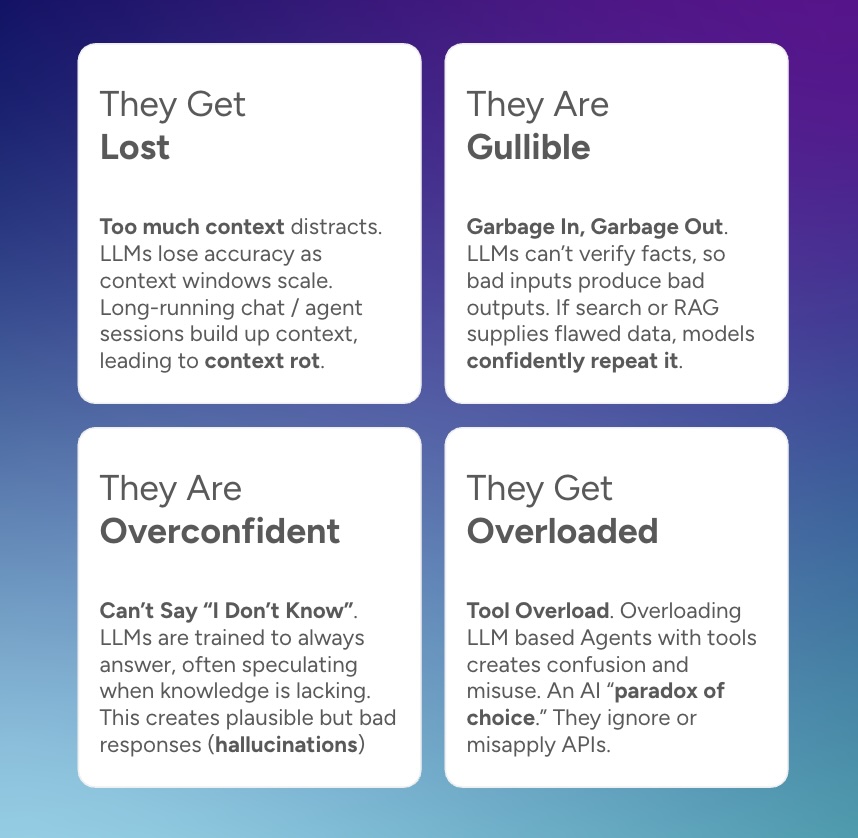

- Agentic orchestration patterns like planning, parallelization, and merging - reminiscent of service-oriented call graphs or MapReduce.

- Sub-agent modular design - agents focused on narrow, well-defined tasks, like microservices.

- Context engineering - pulling the most relevant context from memory or underlying search engines.

- Query understanding and routing - sending tasks to the right models or tools.

- Reliability engineering - retries, tracing, observability, auto-healing infra.
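Two of the ideas in this list, validation loops and retries, combine naturally. The following is a minimal sketch, not a production pattern: `call_with_retries`, `step`, and `is_valid` are hypothetical names, and the step here is a stand-in for whatever model or tool call an agent makes.

```python
import time
from typing import Callable, Optional


def call_with_retries(
    step: Callable[[], str],
    is_valid: Callable[[str], bool],
    max_attempts: int = 3,
    base_delay: float = 0.1,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Run `step`, validate its output, and retry with exponential backoff."""
    last_error: Optional[Exception] = None
    for attempt in range(max_attempts):
        try:
            result = step()
            if is_valid(result):
                return result
            last_error = ValueError(f"invalid output: {result!r}")
        except Exception as exc:  # a real system would catch narrower errors
            last_error = exc
        if attempt < max_attempts - 1:
            sleep(base_delay * 2 ** attempt)  # 0.1s, 0.2s, 0.4s, ...
    raise RuntimeError(f"step failed after {max_attempts} attempts") from last_error


# Hypothetical flaky step: fails validation twice, then returns usable output.
outputs = iter(["", "???", '{"status": "ok"}'])
result = call_with_retries(
    step=lambda: next(outputs),
    is_valid=lambda s: s.startswith("{"),
    sleep=lambda _: None,  # skip real waiting in this demo
)
print(result)
```

The validator is the important part: a retry loop without a check on the output only repeats the same mistake faster.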

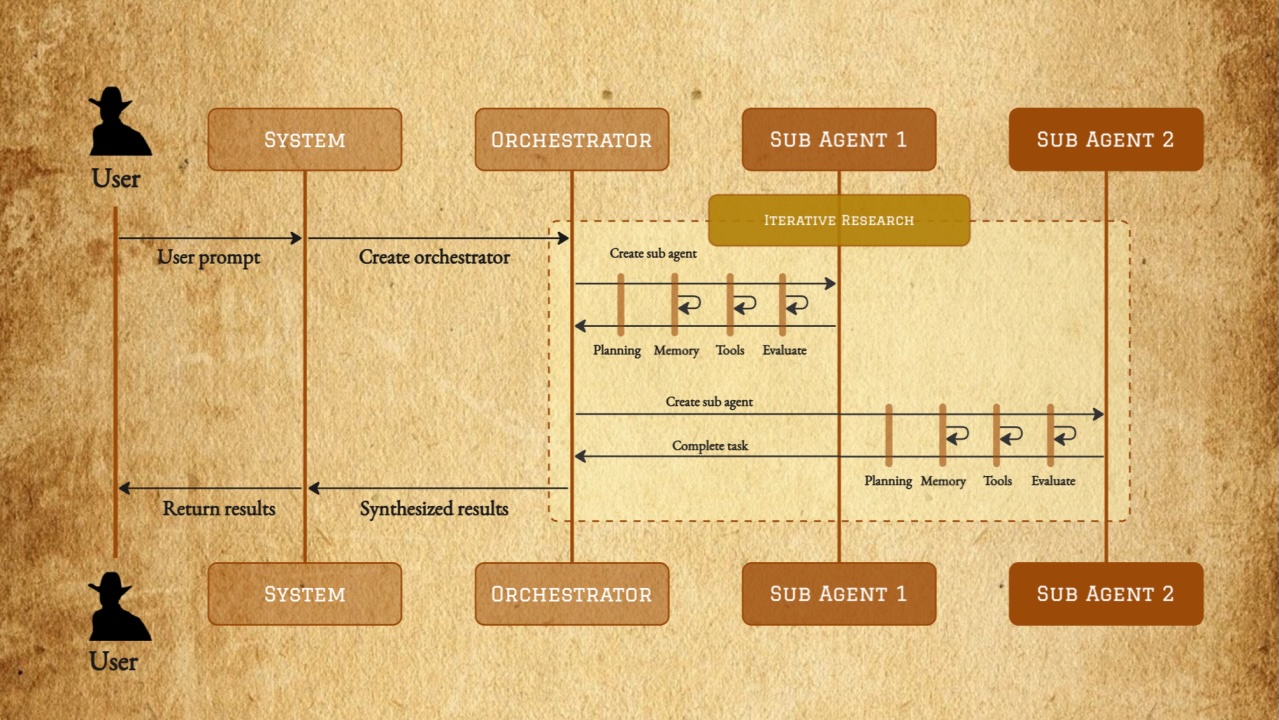

These aren’t new ideas. But they’re being rediscovered and reinvented in the context of AI systems. For example, here’s Anthropic defining a very familiar-looking multi-agent architecture, and famed ML researcher Andrew Ng introducing a MapReduce-style parallel agent approach.

A resilient service architecture looks very similar to a resilient multi-agent architecture
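The MapReduce comparison can be sketched in a few lines. This is an illustration of the general fan-out and merge shape only, not Anthropic's or Andrew Ng's actual design; `summarize_chunk` and `merge` are hypothetical stand-ins for a sub-agent call and a merging step.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List


def summarize_chunk(chunk: str) -> str:
    """Hypothetical sub-agent: stands in for an LLM call on one chunk."""
    return chunk.split(".")[0].strip()


def merge(partials: List[str]) -> str:
    """Hypothetical merge step: combines sub-agent outputs into one result."""
    return " | ".join(partials)


def map_reduce_agents(
    chunks: List[str],
    worker: Callable[[str], str],
    reducer: Callable[[List[str]], str],
    max_workers: int = 4,
) -> str:
    """Fan chunks out to parallel workers (map), then merge results (reduce)."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        partials = list(pool.map(worker, chunks))  # results keep input order
    return reducer(partials)


docs = [
    "Retries help. More text.",
    "Tracing helps. More text.",
    "Evals help. More text.",
]
print(map_reduce_agents(docs, summarize_chunk, merge))
```

Threads are used here on the assumption that sub-agent calls are I/O-bound network requests; the same shape works with async tasks or a job queue.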

The teams that bring disciplined software engineering to AI will win the next decade.

The Paradox of Agent Workflows

AI agents excel when the task is deeply personal or open-ended. Essentially, the long tail of use cases.

But if you want to automate something your users do over and over again, building it the “old-fashioned way” might still be the right choice (today at least). Traditional software can guarantee the nines of reliability that agents don’t have yet.

Karpathy summed it up perfectly: “A lot of times, the value that I brought to the company was telling them not to use AI.”
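One way to picture this split is a router that sends known, repetitive requests to ordinary code and only hands the long tail to an agent. The sketch below is a deliberately naive illustration: the handlers, the keyword matching, and `agent_fallback` are all hypothetical, and a real system would use proper intent classification.

```python
from typing import Callable, Dict


def reset_password(request: str) -> str:
    """Deterministic handler for a common, repetitive task."""
    return "Password reset link sent."


def check_order_status(request: str) -> str:
    """Deterministic handler for another common task."""
    return "Your order is on its way."


# Known, high-volume intents are served by traditional code paths.
DETERMINISTIC_HANDLERS: Dict[str, Callable[[str], str]] = {
    "reset password": reset_password,
    "order status": check_order_status,
}


def agent_fallback(request: str) -> str:
    """Placeholder for an LLM agent call on long-tail requests."""
    return f"[agent] handling open-ended request: {request}"


def route(request: str) -> str:
    """Prefer deterministic handlers; fall back to the agent otherwise."""
    text = request.lower()
    for keyword, handler in DETERMINISTIC_HANDLERS.items():
        if keyword in text:
            return handler(request)
    return agent_fallback(request)


print(route("I need to reset password on my account"))
print(route("Help me plan a team offsite for 12 people"))
```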

The Next Breakthrough in AI? Software Engineering

We’re in a phase where agents are already unlocking big gains for coding, creative, or custom one-off tasks, especially in lower-stakes situations with clear evals and humans in the loop. But making them trustworthy and enterprise-grade will be a defining challenge of the coming years.

The good news is that AI has a lot of momentum and can unlock more and more use cases as it matures. And unlike, say, self-driving cars, it can do so with gradually increasing autonomy: low-stakes today, medium-stakes tomorrow, highest-stakes in the future.

And the work to address this has already begun.

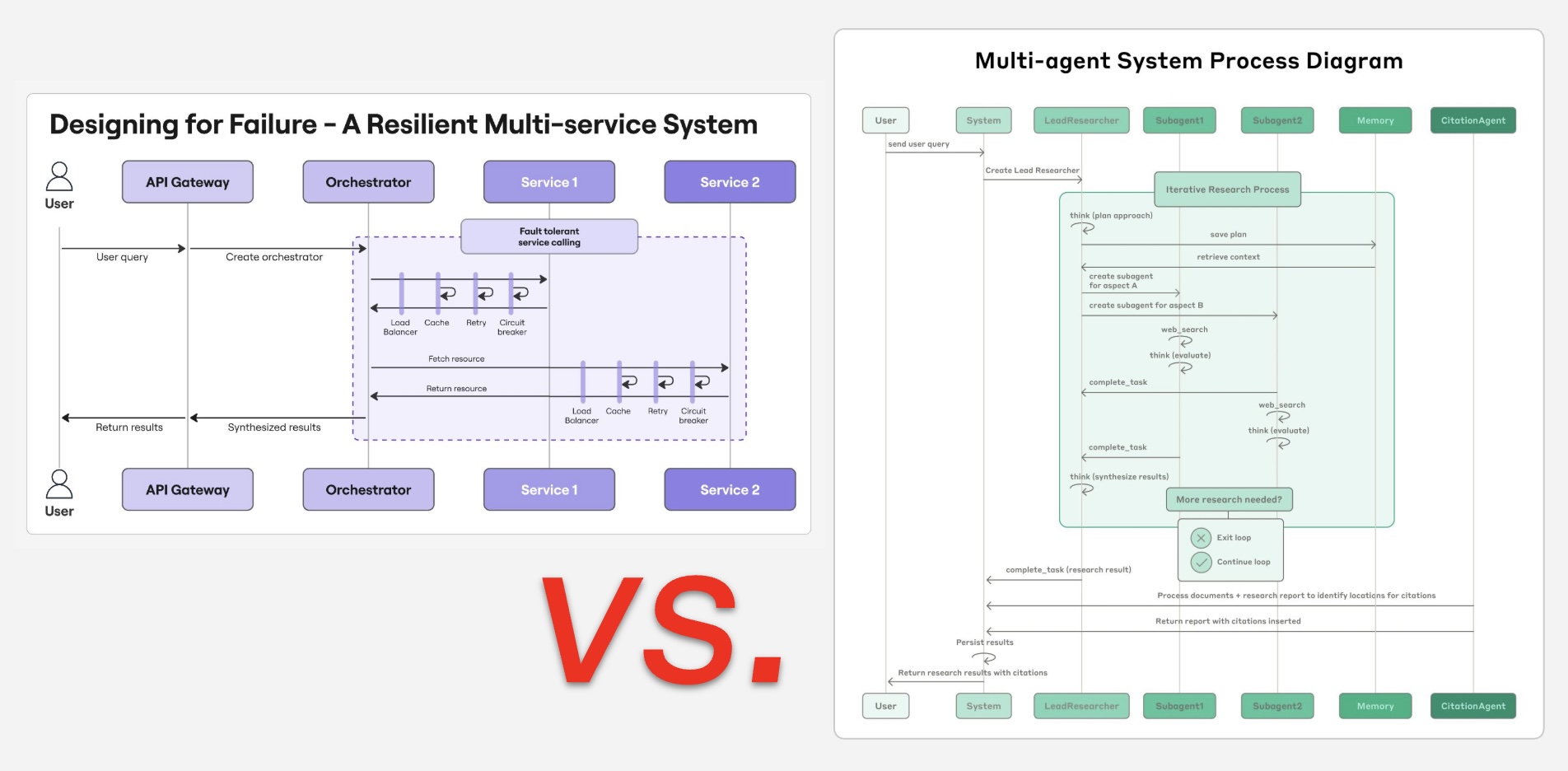

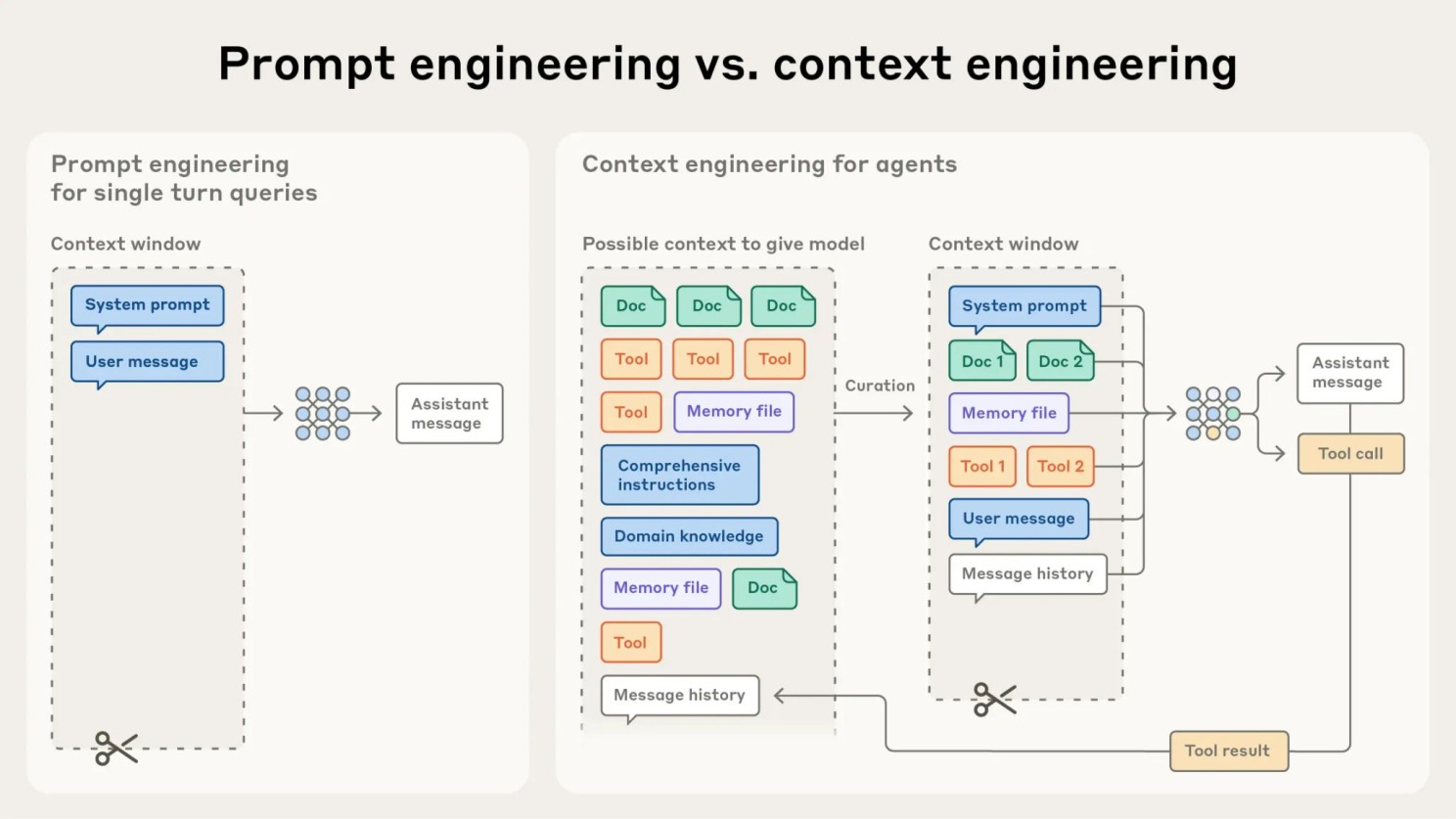

Anthropic describing the different techniques of "engineering"

“Prompt engineering” started as the first approach to making AI behave more reliably. Then it morphed into “context engineering,” as we realized that passing the most essential information to LLMs increases accuracy and predictability. Now we are off to “agent engineering,” which focuses on task breakdowns, sub-agents, and tight feedback loops and evals to control reliability.

Sooner or later, we’ll be back to calling it “software engineering.” And there’s nothing wrong with that.