Using AI Language Models to Generate Fantasy Football Player Outlooks

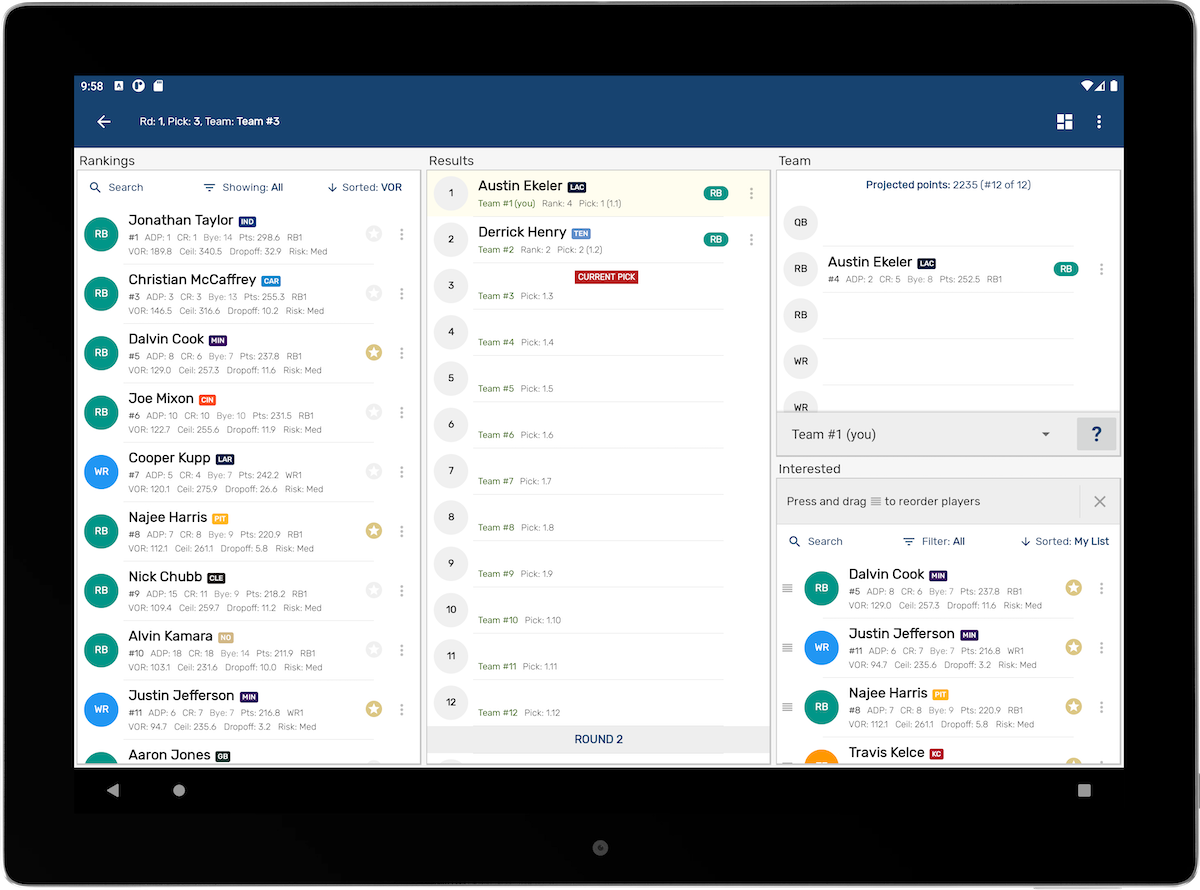

I’ve been heads-down on Draft Punk, my fantasy football drafting companion app, and this offseason I wanted the player pages to have season outlook writeups: the kind that the best analysts and beat writers create, with context, nuance, and a clear “what to do” at draft time.

I already had a ton of data about the upcoming season: player info, past statistics, team schedules, team news, player news, and depth charts. On top of that, I generate player projections using wisdom of the crowd, along with player tiers, automatic sleepers/busts, and draft recommendations, and I even use Markov chains to predict availability odds.

But I needed a compelling narrative to stitch that all together. So I went exploring large language models.

The language models I tried

I found OpenAI and their GPT-3 models to be pretty far along on the research and capability of large language models. We had explored a project at Uber Eats to use conversational AI to receive food orders from phone calls, so I was familiar with the technology and wanted to put it to use.

- text-davinci-002 Best long-form writer I tested. Good at following directions, keeping a consistent voice, and weaving facts into paragraphs. Slower and pricier, but the output was closest to “publishable.” (InstructGPT background)

- text-curie-001 Fast and cheap. Great for shorter tasks—headlines, bullet points, tier labels, and re-phrasing. It struggled with longer narratives without heavy scaffolding. (OpenAI API models overview)

- text-babbage-001 / text-ada-001 Useful workhorses for lightweight tasks: classifying news blurbs as relevant/irrelevant, tagging injury notes, quick sentiment on coach quotes. (API reference)

- Embeddings (text-similarity-* and text-search-* families) Critical for grounding. I used these to index player projections, depth charts, recent news, schedule difficulty, team tendencies, and my Markov availability odds so the generator could pull the right facts before it wrote anything. (Embeddings guide)

As you’ll see later, I found that generating embeddings and fetching relevant chunks was sometimes overkill. I could simply pass the player info straight into the language model and get pretty good results.

What I tried in the APIs

I did nearly everything through the Completions API and Embeddings API. A few patterns emerged.

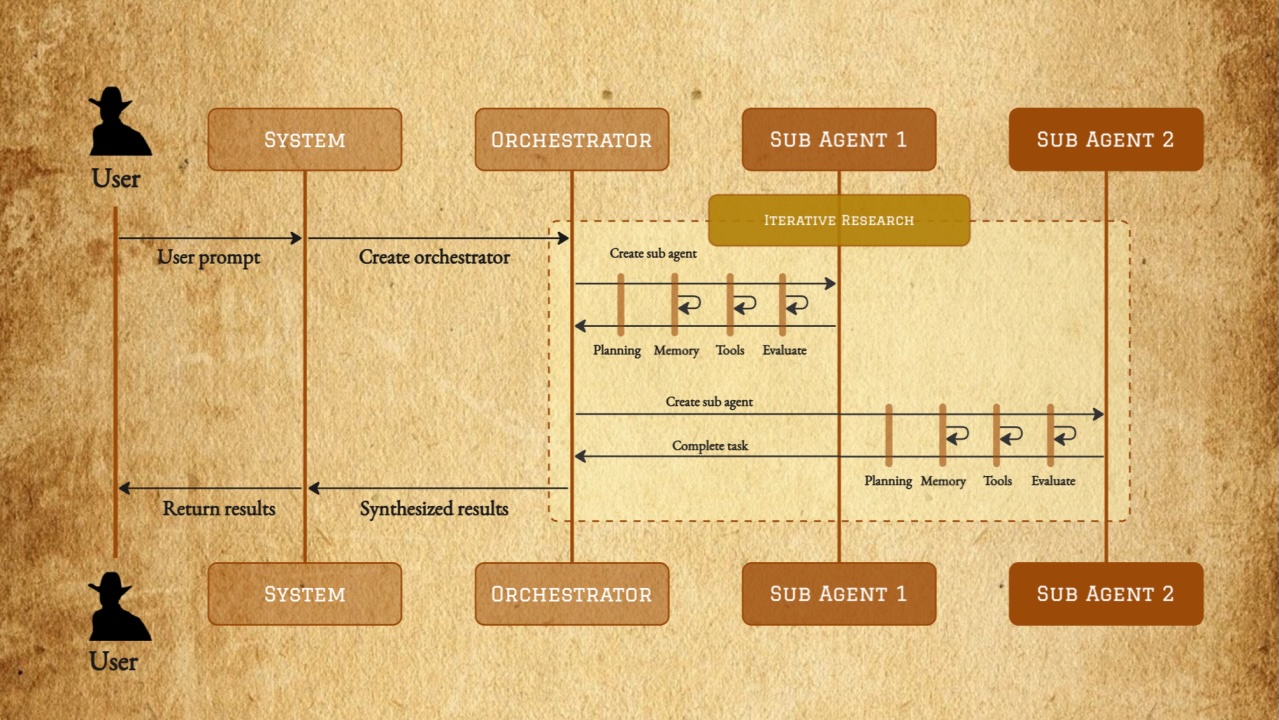

1. Retrieval-augmented generation (RAG) to keep it honest

LLMs are great at writing; they’re less great at being right by default. I built a tiny retrieval layer:

- Chunk my data into bite-sized passages per player (projections deltas, role notes, schedule flags, depth chart context, news summaries).

- Embed each chunk (text-similarity-ada-001 was the budget pick; *-curie-001 gave a small relevance bump).

- At write time, embed the prompt/question, grab the top-k chunks via cosine similarity, and stuff those facts into the prompt before generating.

This kept the model focused on my numbers and today’s situation. If you want the deeper theory, start with Retrieval-Augmented Generation by Lewis et al. (paper).
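The retrieval step above can be sketched in a few lines. This is a minimal illustration, not the app's actual code: the three-dimensional vectors are toy stand-ins for real embeddings, and `top_k_chunks` and `INDEX` are hypothetical names.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def top_k_chunks(query_vec, indexed_chunks, k=3):
    """Return the k chunk texts whose vectors are most similar to the query."""
    scored = [(cosine_similarity(query_vec, vec), text)
              for text, vec in indexed_chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:k]]

# Assumption: toy vectors stand in for embeddings returned by an embeddings API.
INDEX = [
    ("Projection (PPR): 85-1,050-9, WR12 consensus", [0.9, 0.1, 0.0]),
    ("Schedule: Weeks 1-4 tough vs perimeter CBs", [0.1, 0.9, 0.0]),
    ("News: hamstring tightness, no setback", [0.0, 0.2, 0.9]),
]

query_vec = [0.8, 0.3, 0.0]  # pretend embedding of the question being asked
facts = top_k_chunks(query_vec, INDEX, k=2)

# The selected chunks become the FACTS block of the prompt.
prompt_facts = "\n".join(f"- {fact}" for fact in facts)
```

With a few dozen chunks per player, a plain Python loop like this is fast enough; a vector index only starts to matter at much larger scale.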

I did find that generating vector embeddings was overkill at times. Later I found that simply passing the formatted text in the prompt template fit within the context window, produced results as good as before, and frankly simplified the setup for this project.

The original Retrieval-Augmented Generation paper seemed to suggest vectors were critical for this technique. But since the prompt is just text (which the language model then encodes itself), I tried skipping the vector step and the results were pretty good. My per-player context was small enough for this to work. Applying RAG to a larger data set is where I could see that retrieval pre-processing becoming necessary.

2. Prompt scaffolding (templates + facts)

I learned quickly that “write a blurb about DK Metcalf” yields creative fiction. The fix was structure and specifics.

Example Prompt template:

You are a fantasy football analyst writing a 180–220 word season outlook report for the 2022 NFL season.

Voice: clear, direct, helpful. Avoid cliches.

FACTS (verbatim, may include numbers):

- Team: SEA. Role: X. Depth chart: Y.

- Projection (PPR): 85-1,050-9, WR12 consensus (range WR8–WR16).

- Average Draft Position (ADP): 67

- Schedule: Weeks 1–4 tough vs perimeter CBs; weeks 14–16 favorable.

- News (last 14d): hamstring tightness, limited two practices, no setback.

Constraints:

- Do not invent facts. If unknown, say "unclear as of June 2022."

- Cite at least three of the FACTS directly.

- End with a concise draft plan: "Round/price | Risk | Ceiling | Team fit".

Write the report.
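A template like this is easy to fill programmatically. The sketch below assumes the facts arrive as a simple label-to-value mapping; `build_prompt` and the dictionary keys are hypothetical names chosen for illustration.

```python
TEMPLATE = """You are a fantasy football analyst writing a {min_words}–{max_words} word season outlook report for the {season} NFL season.
Voice: clear, direct, helpful. Avoid cliches.

FACTS (verbatim, may include numbers):
{facts}

Constraints:
- Do not invent facts. If unknown, say "unclear as of {as_of}."
- Cite at least three of the FACTS directly.
- End with a concise draft plan: "Round/price | Risk | Ceiling | Team fit".

Write the report."""

def build_prompt(facts, as_of, season=2022, min_words=180, max_words=220):
    """Render the template with one '- label: value' line per fact."""
    fact_lines = "\n".join(f"- {label}: {value}" for label, value in facts.items())
    return TEMPLATE.format(
        min_words=min_words,
        max_words=max_words,
        season=season,
        facts=fact_lines,
        as_of=as_of,
    )

# Values copied from the example template above.
player_facts = {
    "Team": "SEA",
    "Projection (PPR)": "85-1,050-9, WR12 consensus (range WR8–WR16)",
    "Average Draft Position (ADP)": "67",
    "Schedule": "Weeks 1–4 tough vs perimeter CBs; weeks 14–16 favorable",
    "News (last 14d)": "hamstring tightness, limited two practices, no setback",
}

prompt = build_prompt(player_facts, as_of="June 2022")
```

Passing the "as of" date in explicitly keeps every report pinned to the same point in time as the facts it quotes.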

Completions params I leaned on:

- temperature: 0.3–0.5 (steadier, less flowery)

- top_p: 0.9 (aka nucleus sampling; see Holtzman et al. paper)

- max_tokens: 220–320 per section

- stop: ["\n\n--", "END"] to prevent run-ons

- presence_penalty: small nudge to avoid repetition for multi-paragraph outputs

For parameter details, see the OpenAI API reference.
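Put together, the parameters listed above might look like the request body below. This is a sketch that only builds the payload and makes no network call. The exact `temperature` of 0.4, the `max_tokens` default of 260, and the `presence_penalty` of 0.2 are assumed values picked from within the stated ranges, and `completion_params` is a hypothetical helper.

```python
import json

def completion_params(prompt, section_tokens=260):
    """Build a request body for the legacy Completions endpoint."""
    return {
        "model": "text-davinci-002",
        "prompt": prompt,
        "temperature": 0.4,        # within the 0.3-0.5 range: steadier output
        "top_p": 0.9,              # nucleus sampling cutoff
        "max_tokens": section_tokens,
        "stop": ["\n\n--", "END"],  # cut off run-ons
        "presence_penalty": 0.2,   # assumed value for the "small nudge"
    }

params = completion_params("Write the report.")
request_body = json.dumps(params)  # what would be sent as the JSON payload
```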

3. Light post-processing and ranking

I occasionally generated two variants (e.g., one risk-forward, one opportunity-forward) and scored them against a checklist: number of facts used, coverage of projection range, mention of schedule, explicit draft guidance. The highest-scoring one shipped.
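One way to implement that checklist is with plain substring checks. The sketch below is an assumption about how such scoring could work, not the scoring actually used. The keyword lists are hypothetical and deliberately crude (for example, "round" would also match "around").

```python
def score_variant(text, fact_snippets, range_snippets,
                  schedule_words=("schedule", "week"),
                  draft_words=("round", "draft")):
    """Score one generated report against the four checklist items."""
    lowered = text.lower()
    facts_used = sum(1 for s in fact_snippets if s.lower() in lowered)
    covers_range = all(s.lower() in lowered for s in range_snippets)
    mentions_schedule = any(w in lowered for w in schedule_words)
    has_guidance = any(w in lowered for w in draft_words)
    return facts_used + int(covers_range) + int(mentions_schedule) + int(has_guidance)

def pick_best(variants, fact_snippets, range_snippets):
    """Return the highest-scoring variant (first one wins ties)."""
    return max(variants, key=lambda v: score_variant(v, fact_snippets, range_snippets))

fact_snippets = ["WR12", "Weeks 1-4", "hamstring"]
range_snippets = ["WR8", "WR16"]

variant_a = ("Metcalf projects as the WR12, with a range of WR8 to WR16. "
             "The schedule is tough in Weeks 1-4. Target him in Round 5.")
variant_b = "Metcalf is a big, fast receiver with upside."

best = pick_best([variant_a, variant_b], fact_snippets, range_snippets)
```

Here `variant_a` scores 5 (two facts, range, schedule, guidance) and `variant_b` scores 0, so the grounded variant ships.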

Combining generative text with pre-determined blocks

I was still struggling a bit to control the quality and output of the generated reports, so I ended up blending some pre-written sections with the AI response:

- “Usage & Role” – partially templated using depth chart and team tendency tags.

- “Injury/Availability” – templated sentence starters fed with any practice/injury notes.

- “Draft Plan” – templated rubric with round, risk, ceiling filled by the model but constrained to allowed phrases.

This hybrid kept tone consistent and let the model spend its creativity where it adds value: transitions, nuance, and connecting dots.
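The "constrained to allowed phrases" idea for the Draft Plan can be sketched as a small validator. Everything here is illustrative: the allowed phrases, field names, and sentence template are hypothetical, and the pipe-delimited input follows the "Round/price | Risk | Ceiling | Team fit" format from the prompt.

```python
FIELDS = ("round", "risk", "ceiling", "team_fit")

# Assumption: example vocabularies, not the app's real phrase lists.
ALLOWED = {
    "risk": {"low", "medium", "high"},
    "ceiling": {"league-winner", "solid starter", "depth"},
    "team_fit": {"strong", "neutral", "poor"},
}

def parse_draft_plan(line):
    """Split the model's draft-plan line and reject anything off-vocabulary."""
    parts = [part.strip() for part in line.split("|")]
    if len(parts) != len(FIELDS):
        return None
    plan = dict(zip(FIELDS, parts))
    for field, allowed in ALLOWED.items():
        if plan[field].lower() not in allowed:
            return None
    return plan

def render_draft_plan(plan):
    """Drop the validated values into a fixed sentence template."""
    return (f"Draft Plan: target in {plan['round']}. Risk: {plan['risk']}. "
            f"Ceiling: {plan['ceiling']}. Team fit: {plan['team_fit']}.")

good = parse_draft_plan("Round 5 | Medium | Solid starter | Strong")
bad = parse_draft_plan("Round 5 | spicy | moon | vibes")
section = render_draft_plan(good)
```

When parsing returns `None`, the caller can regenerate the section or fall back to a fully templated sentence instead of publishing free-form text.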

What worked, what didn’t

Worked

- Retrieval before generation: huge drop in hallucinations.

- Low temperature + explicit constraints: more concise, fewer cute metaphors.

- Shorter sections composed together: better than one giant 600-token ask.

- Curie/Ada for classification and filtering tasks around the pipeline (cheap and fast).

Didn’t

- Letting the model summarize and opine and recommend in one pass.

- Overly clever prompts. Plain-language instructions beat flowery, lengthy prompts.

- Trusting the model with “latest news” without pinning a date (“as of June 2022”) and quoting the source snippet.

Lessons I’m taking forward

- Ground first, generate second. Retrieval isn’t optional if you care about accuracy.

- Shape the page, not just the paragraph. Break the problem into labeled sections with clear constraints.

- Use the right model for the job. Davinci for narrative, Curie/Ada for the plumbing.

- Keep it dated. “As of June 2022” prevents the model from time-traveling your facts.

- Expect to iterate. Prompt tuning is important. Small changes (tone, constraints, stop tokens) mattered more than fancy tricks.

I’m encouraged by how fast the field is moving. Even in my short tests I saw clear improvements across OpenAI’s releases. This technique will only get better and more accurate.

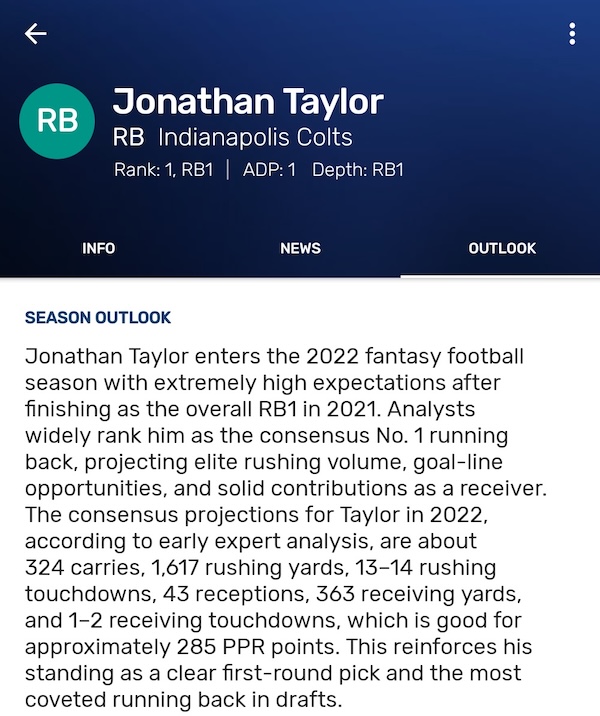

Give it a Try

Each player profile now blends my projections, schedule context, team/role signals, and fresh notes into a scouting report that reads like something you’d expect from a seasoned analyst.

If you want actionable, AI-assisted analysis with some of the most accurate projections in the industry, give Draft Punk a spin. Set your league settings, browse the player pages, and see how the reports line up with your gut. I think you’ll draft with more confidence. And have more fun doing it.